LLM & GPT Integration Services

Enterprise LLM Integration

We integrate large language models — OpenAI GPT, Anthropic Claude, Google Gemini, and open-source alternatives — into your existing applications and workflows with proper prompt engineering, safety guardrails, and production monitoring.

Production-Ready LLM Integration for Your Business

Integrating LLMs into production applications requires more than API calls. We handle the full complexity — prompt engineering, response validation, rate limiting, cost optimisation, fallback strategies, and monitoring — ensuring your LLM integration is reliable, safe, and production-ready.

Proven Success with Data-Driven Design, Development and Digital Marketing.

Service Features

What Our Clients Say About Us

Our Process

Requirements & Evaluation

We define your LLM requirements — accuracy, latency, cost targets, privacy constraints — and evaluate models from OpenAI, Anthropic, Google, and open-source options.
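As an illustration of this step, the sketch below drops any candidate that breaks a hard constraint (latency, cost, self-hosting for privacy) and then picks the most accurate of the rest. The `Candidate` and `Requirements` types, the model names and all numbers are hypothetical; in practice the accuracy figure would come from your own evaluation set.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    name: str                    # hypothetical model identifier
    accuracy: float              # score on your own evaluation set, 0..1
    p95_latency_ms: float
    cost_per_1k_requests: float
    self_hostable: bool


@dataclass
class Requirements:
    max_latency_ms: float
    max_cost_per_1k_requests: float
    must_self_host: bool = False  # e.g. a strict privacy constraint


def select_model(candidates: List[Candidate], req: Requirements) -> Optional[Candidate]:
    """Filter by hard constraints, then pick the most accurate model (ties go to the cheaper one)."""
    eligible = [
        c for c in candidates
        if c.p95_latency_ms <= req.max_latency_ms
        and c.cost_per_1k_requests <= req.max_cost_per_1k_requests
        and (c.self_hostable or not req.must_self_host)
    ]
    if not eligible:
        return None  # no model meets the requirements as stated
    return max(eligible, key=lambda c: (c.accuracy, -c.cost_per_1k_requests))
```

Treating latency, cost and privacy as pass/fail filters rather than blending them into one score keeps the trade-off explicit: if nothing qualifies, the requirements themselves need revisiting.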

Prompt Engineering

We design, test, and optimise prompt templates for your use cases — building a library of reliable prompts that produce consistent, high-quality outputs.
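A prompt library can be as simple as named, parameterised templates that fail loudly when a field is missing. This minimal sketch uses Python's standard `string.Template`; the template names and wording are hypothetical examples, not a fixed catalogue.

```python
from string import Template

# Hypothetical prompt library: names and wording are illustrative only.
PROMPTS = {
    "summarise": Template(
        "Summarise the following text in at most $max_words words.\n"
        "Text:\n$text"
    ),
    "classify": Template(
        "Classify the message into exactly one of: $labels.\n"
        "Reply with the label only.\n"
        "Message:\n$text"
    ),
}


def render_prompt(name: str, **fields: str) -> str:
    """Fill a named template.

    Raises KeyError for an unknown template name or a missing field,
    so a malformed prompt never reaches the model.
    """
    return PROMPTS[name].substitute(**fields)
```

For example, `render_prompt("classify", labels="billing, technical, other", text=message)` produces a prompt with a fixed instruction and a variable message. Keeping templates in one versioned place makes it possible to test and improve each prompt independently.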

Safety & Guardrails

We implement input validation, output filtering, content moderation, and structured output enforcement to ensure safe, predictable behaviour.
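To show what input validation and structured output enforcement can look like in practice, here is a minimal sketch for a hypothetical ticket-classification task. The schema, category set and length limit are assumptions chosen for illustration; any reply that does not match the expected structure is rejected rather than passed on.

```python
import json

# Hypothetical schema for a ticket-classification response.
REQUIRED_FIELDS = {"category": str, "confidence": float}
ALLOWED_CATEGORIES = {"billing", "technical", "other"}
MAX_INPUT_CHARS = 4000  # assumed limit; tune per use case


def validate_input(text: str) -> str:
    """Reject empty or oversized user input before it reaches the model."""
    stripped = text.strip()
    if not stripped:
        raise ValueError("empty input")
    if len(stripped) > MAX_INPUT_CHARS:
        raise ValueError("input too long")
    return stripped


def parse_model_output(raw: str) -> dict:
    """Parse the model's reply as JSON and enforce the expected structure."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"missing or invalid field: {name}")
    if data["category"] not in ALLOWED_CATEGORIES:
        raise ValueError("unknown category")
    if not 0.0 <= data["confidence"] <= 1.0:
        raise ValueError("confidence out of range")
    return data
```

Because every failure raises `ValueError`, the calling code can handle all of them the same way, for example by retrying with a corrective prompt or returning a safe default.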

Integration Build

We build the integration layer — API clients, caching, rate limiting, retry logic, fallback strategies, and error handling for production reliability.
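Retry logic and fallback strategies can be sketched independently of any provider SDK. In the example below, providers are plain callables and `TransientError` is a hypothetical stand-in for whatever timeout or rate-limit error a real client raises; the delays follow a simple exponential backoff.

```python
import time
from typing import Callable, Optional, Sequence


class TransientError(Exception):
    """Hypothetical error type for retryable failures (timeouts, rate limits)."""


def call_with_retry(fn: Callable[[str], str], prompt: str,
                    max_attempts: int = 3, base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> str:
    """Retry fn on TransientError, waiting base_delay * 2**attempt between tries."""
    for attempt in range(max_attempts):
        try:
            return fn(prompt)
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # retries exhausted: let the caller decide what to do
            sleep(base_delay * (2 ** attempt))
    raise ValueError("max_attempts must be at least 1")


def call_with_fallback(providers: Sequence[Callable[[str], str]], prompt: str,
                       **retry_kwargs) -> str:
    """Try each provider in order, moving to the next once its retries are exhausted."""
    last_error: Optional[Exception] = None
    for provider in providers:
        try:
            return call_with_retry(provider, prompt, **retry_kwargs)
        except TransientError as exc:
            last_error = exc
    raise RuntimeError("all providers failed") from last_error
```

The injectable `sleep` parameter keeps the backoff testable without real waiting. A production version would typically add jitter to the delays and only retry on errors the provider documents as retryable.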

Testing & Evaluation

We run comprehensive evaluations against test datasets, edge cases, adversarial inputs, and performance benchmarks before production deployment.
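A basic evaluation harness runs a model over a labelled test set and reports accuracy per category, so regressions on edge cases or adversarial inputs are not hidden by a good overall score. This sketch assumes exact-match scoring and a hypothetical `EvalCase` type; many real tasks need fuzzier metrics or model-graded checks.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalCase:
    prompt: str
    expected: str
    tag: str = "standard"  # e.g. "edge_case", "adversarial"


def evaluate(model: Callable[[str], str], cases: List[EvalCase]) -> Dict[str, float]:
    """Return exact-match accuracy (case-insensitive) per tag and overall."""
    totals: Dict[str, int] = {}
    passed: Dict[str, int] = {}
    for case in cases:
        ok = model(case.prompt).strip().lower() == case.expected.strip().lower()
        totals[case.tag] = totals.get(case.tag, 0) + 1
        passed[case.tag] = passed.get(case.tag, 0) + int(ok)
    report = {tag: passed[tag] / totals[tag] for tag in totals}
    report["overall"] = sum(passed.values()) / max(1, sum(totals.values()))
    return report
```

Running the same harness before and after every prompt or model change turns "the new prompt seems better" into a number that can be compared.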

Monitor & Optimise

We deploy with monitoring for quality, cost, latency, and safety metrics — continuously optimising prompts and model selection based on real usage.
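Cost and latency monitoring starts with recording token counts and timings for every call. The sketch below is illustrative only: the model names and per-token prices are placeholders, not real provider rates, and a production system would export these figures to a metrics backend rather than keep them in memory.

```python
import statistics
from dataclasses import dataclass, field
from typing import Dict, List

# Placeholder prices in USD per 1,000 tokens; NOT real provider rates.
PRICE_PER_1K = {
    "model-large": {"input": 0.01, "output": 0.03},
    "model-small": {"input": 0.001, "output": 0.002},
}


@dataclass
class UsageMonitor:
    costs: Dict[str, float] = field(default_factory=dict)  # cumulative cost per model
    latencies_ms: List[float] = field(default_factory=list)

    def record(self, model: str, input_tokens: int, output_tokens: int,
               latency_ms: float) -> float:
        """Log one call and return its cost."""
        price = PRICE_PER_1K[model]
        cost = (input_tokens * price["input"] + output_tokens * price["output"]) / 1000
        self.costs[model] = self.costs.get(model, 0.0) + cost
        self.latencies_ms.append(latency_ms)
        return cost

    def p95_latency_ms(self) -> float:
        """95th-percentile latency; needs at least two recorded calls."""
        return statistics.quantiles(self.latencies_ms, n=20, method="inclusive")[-1]
```

Tracking a high percentile such as p95, rather than the mean, surfaces the slow responses that users actually notice.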

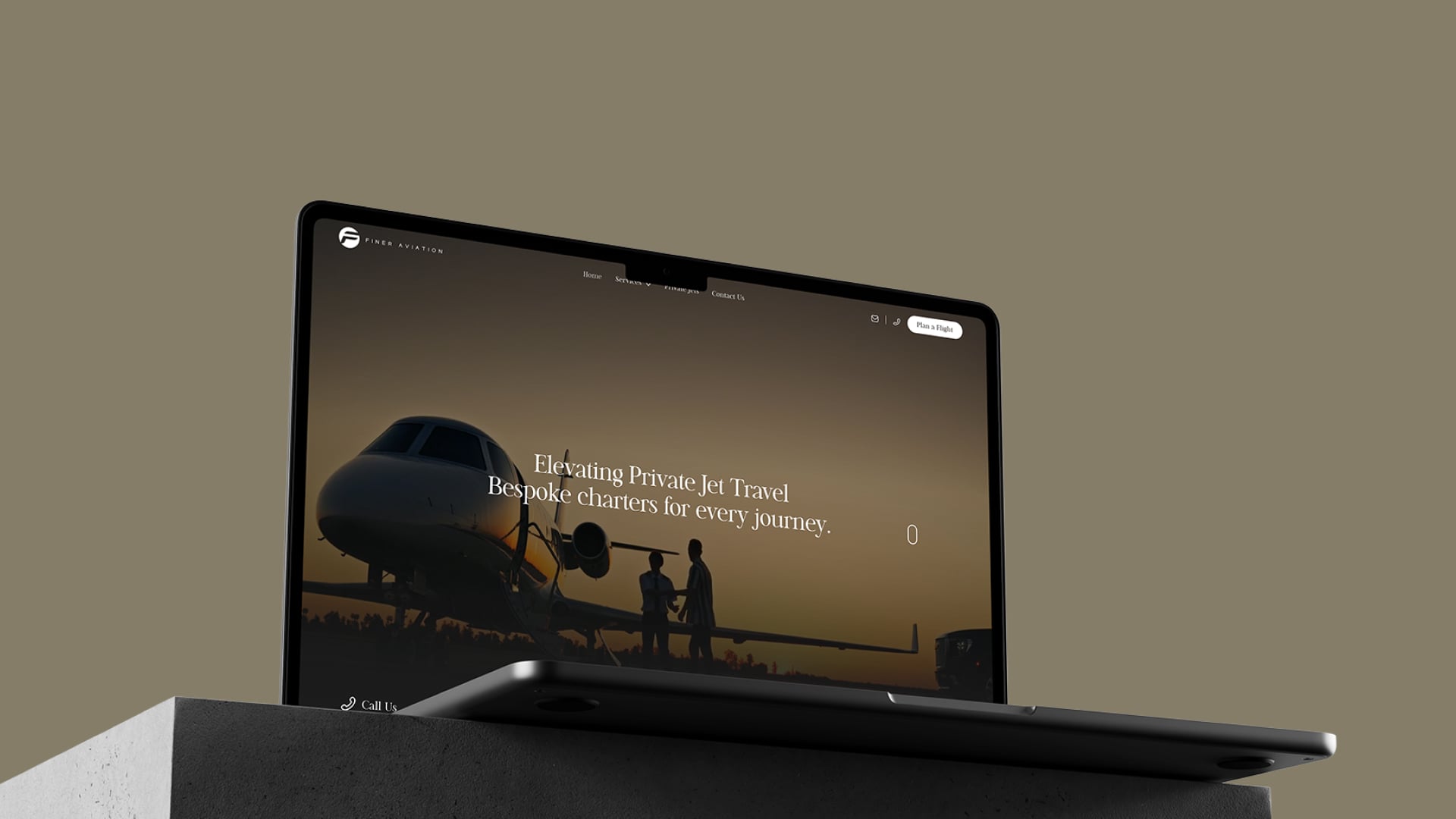

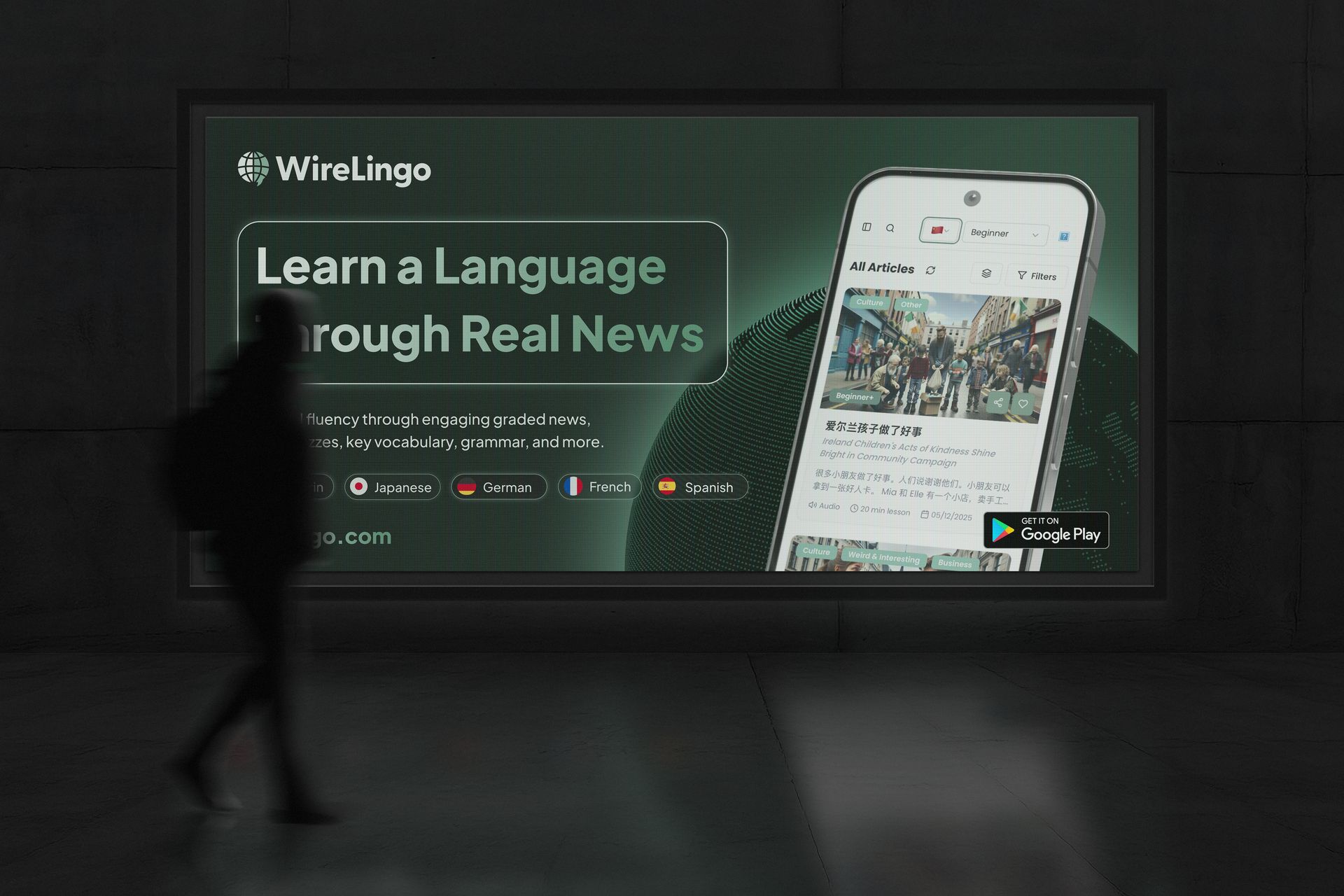

Featured Work

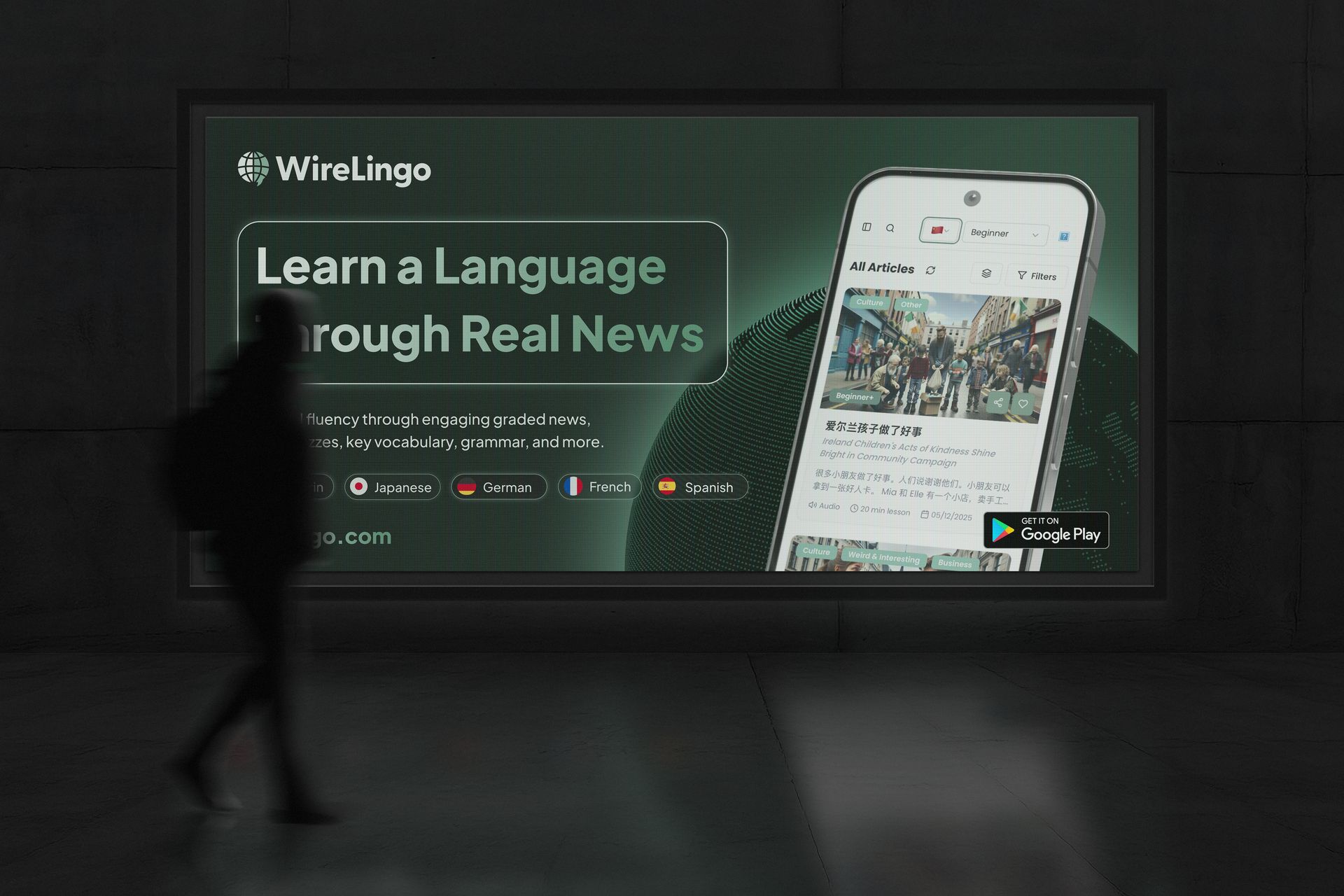

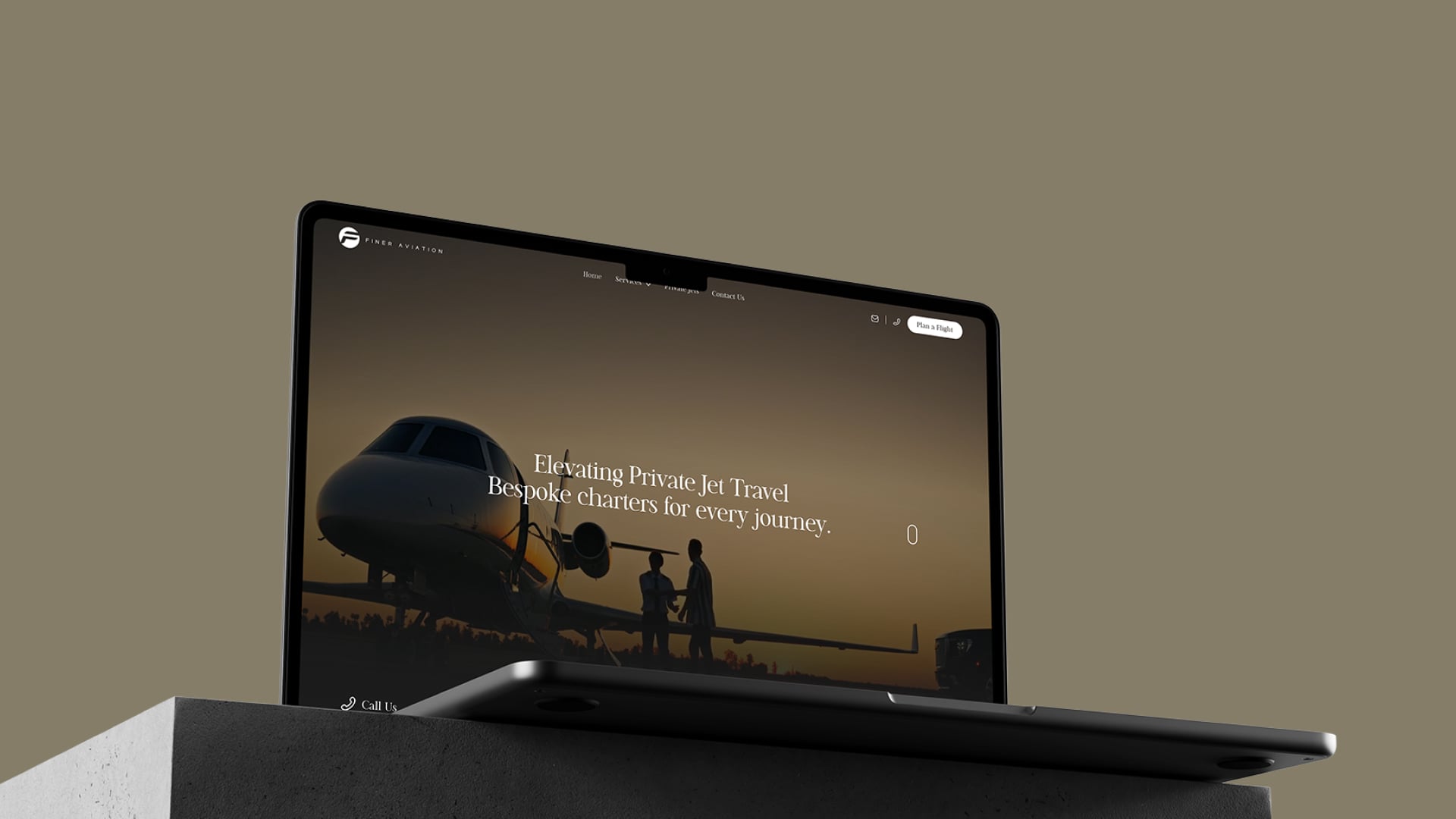

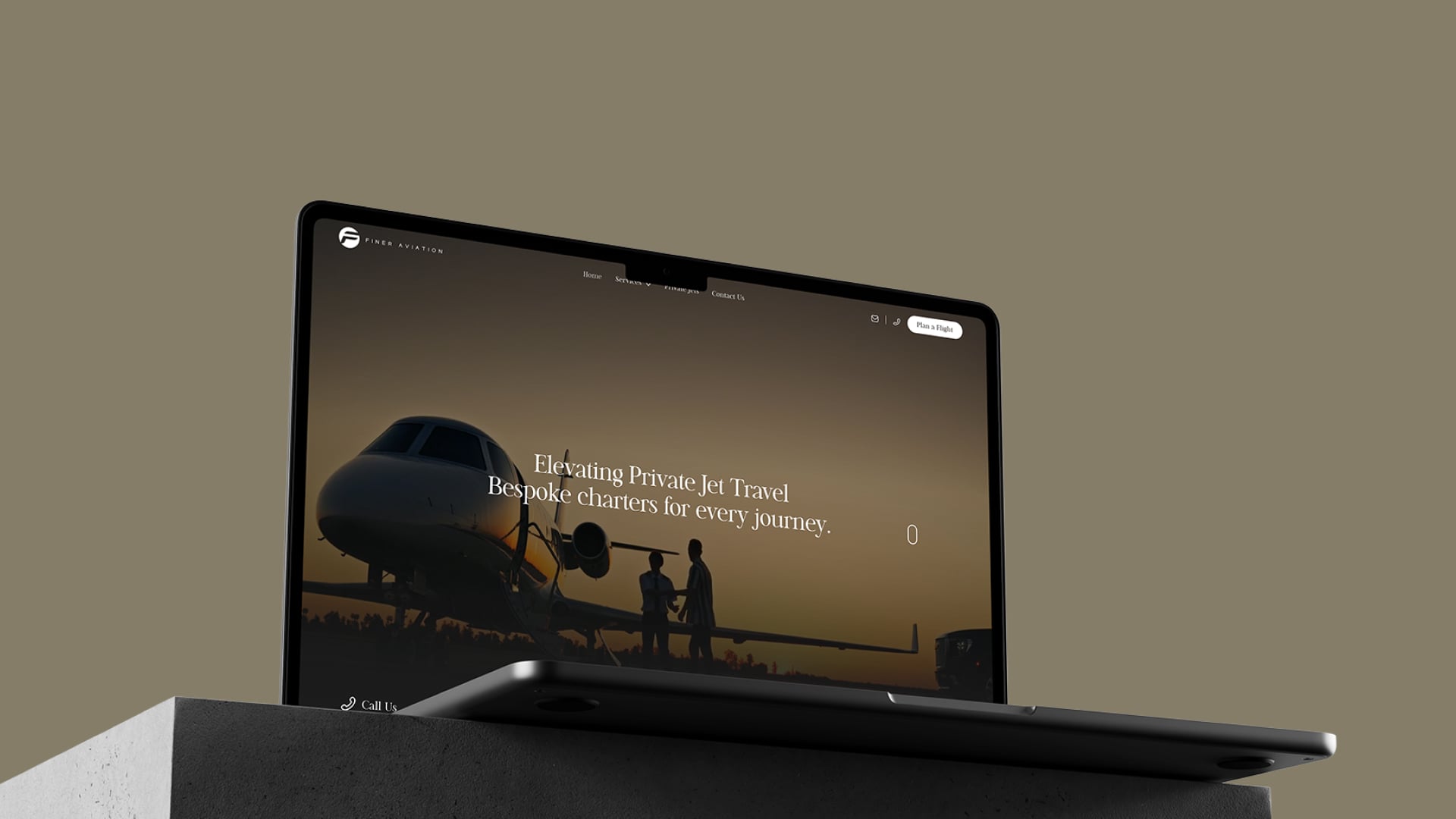

Finer Aviation

A global private jet charter provider needed a premium website to enhance credibility, improve user experience, and streamline inquiries.

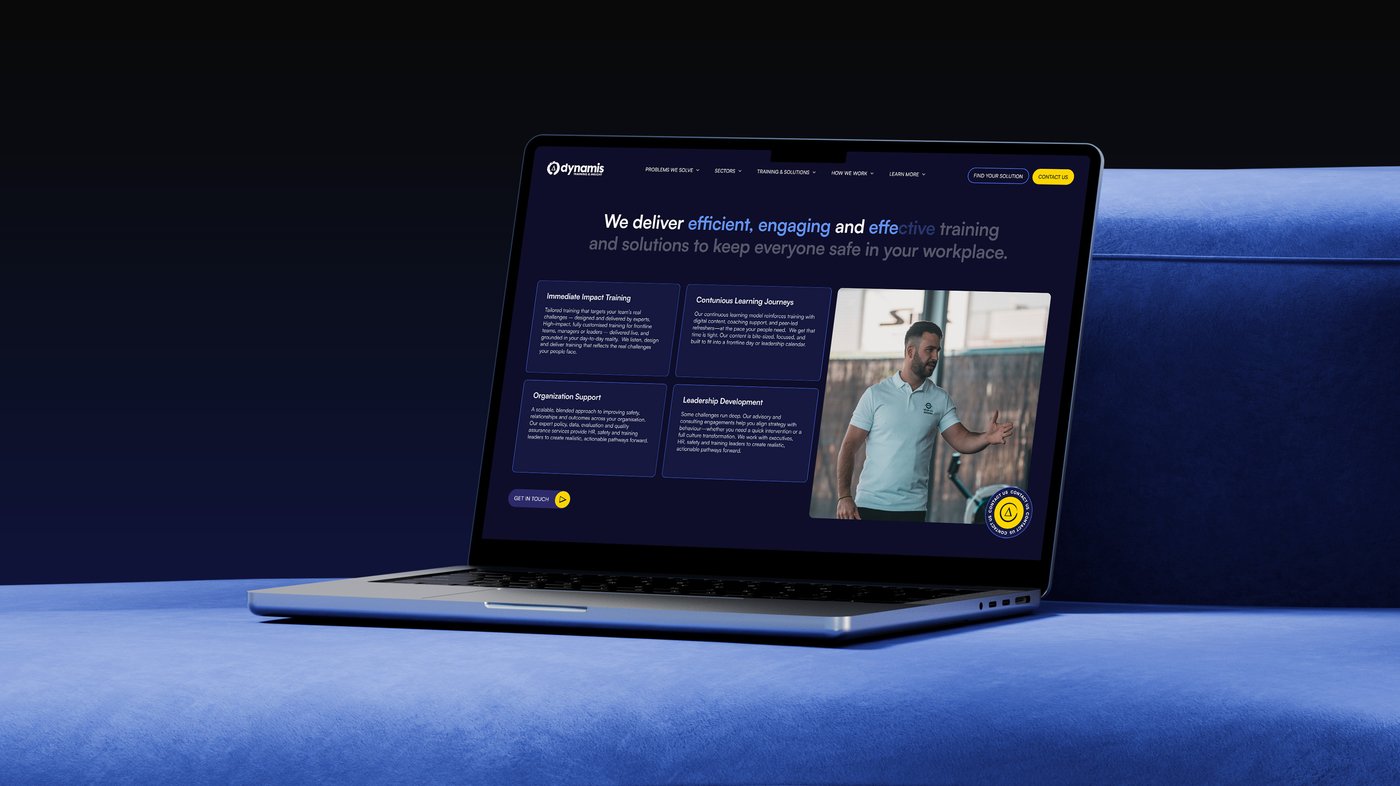

Clarks

Clarks, a globally recognised footwear brand, needed a digital gift card solution that could be accessed via QR code and stored in mobile wallets, ready for use across multiple markets and platforms.

FAQs

It depends on your requirements. GPT-4o offers excellent general performance. Claude excels at long documents and careful reasoning. Gemini is strong for multimodal tasks. Open-source models (Llama, Mistral) offer full control and can be cheaper at scale. We evaluate each against your specific needs.

We combine several cost optimisation strategies: prompt compression, response caching, intelligent model routing (using cheaper models for simple tasks), request batching, and token usage monitoring. Most clients see a 40-60% cost reduction compared with naive API usage.
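Two of these strategies, model routing and response caching, can be combined in a few lines. In this sketch the "simple task" heuristic (short prompt, no analysis keywords) is a hypothetical placeholder; production routers often use a small classifier instead. The cache is keyed by a SHA-256 hash of the prompt so keys stay fixed-size if moved to an external store, and it has no eviction or expiry.

```python
import hashlib
from typing import Callable, Dict


class RoutingCache:
    """Send simple prompts to a cheap model, complex ones to a strong model,
    and reuse cached answers for repeated prompts."""

    COMPLEX_KEYWORDS = ("analyse", "analyze", "explain why", "step by step")  # assumed heuristic

    def __init__(self, cheap: Callable[[str], str], strong: Callable[[str], str],
                 max_simple_chars: int = 500):
        self.cheap = cheap
        self.strong = strong
        self.max_simple_chars = max_simple_chars
        self.cache: Dict[str, str] = {}  # unbounded; add eviction/TTL in production
        self.hits = 0

    def _is_simple(self, prompt: str) -> bool:
        lowered = prompt.lower()
        return (len(prompt) <= self.max_simple_chars
                and not any(k in lowered for k in self.COMPLEX_KEYWORDS))

    def complete(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            self.hits += 1  # cache hit: no tokens spent
            return self.cache[key]
        model = self.cheap if self._is_simple(prompt) else self.strong
        response = model(prompt)
        self.cache[key] = response
        return response
```

Exact-match caching only helps when identical prompts recur, such as FAQ-style queries or repeated classification of the same records.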

We implement strict data handling: PII is stripped before any API call, enterprise data agreements with providers ensure your data is not used to train their models, and open-source models can run on your own infrastructure for maximum control.
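PII stripping means replacing sensitive values with typed placeholders before text leaves your systems. The regular expressions below are illustrative and deliberately narrow (emails, 16-digit card numbers, phone-like digit runs). Real deployments need broader coverage such as names, addresses and national IDs, often with a dedicated named-entity recognition model.

```python
import re

# Illustrative patterns only; order matters (cards are replaced before the
# broader phone pattern can match them).
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("CARD", re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b")),
    ("PHONE", re.compile(r"\+?\d[\d\s-]{8,}\d")),
]


def strip_pii(text: str) -> str:
    """Replace matched PII with placeholders such as [EMAIL] before an API call."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text
```

For example, `strip_pii("Contact jane.doe@example.com or +44 20 7946 0958.")` returns `"Contact [EMAIL] or [PHONE]."`. Typed placeholders keep the sentence understandable to the model while the real values stay in your systems.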

Yes. We design LLM integration as a service layer that connects to your existing application via APIs. This means minimal changes to your current codebase while adding powerful AI capabilities — summarisation, classification, generation, search, and more.
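One way to picture such a service layer: the application calls task-level methods like `summarise` or `classify`, and only a thin adapter knows which provider sits behind them. In this sketch, `CompletionClient` and `LLMService` are hypothetical names, and a real adapter would wrap a provider SDK or a self-hosted model behind the same `complete` method.

```python
from typing import Protocol, Sequence


class CompletionClient(Protocol):
    """Any provider adapter that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


class LLMService:
    """Hypothetical service layer: application code depends on these methods,
    not on a provider SDK, so models can be swapped without touching it."""

    def __init__(self, client: CompletionClient):
        self.client = client

    def summarise(self, text: str, max_words: int = 50) -> str:
        return self.client.complete(f"Summarise in at most {max_words} words:\n{text}")

    def classify(self, text: str, labels: Sequence[str]) -> str:
        answer = self.client.complete(
            f"Classify into one of: {', '.join(labels)}. Reply with the label only.\n{text}"
        ).strip()
        # Guard against the model answering outside the allowed label set.
        return answer if answer in labels else "unknown"
```

Because `CompletionClient` is a structural `Protocol`, any object with a matching `complete` method fits, including a stub used in tests.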